I’m doing some performance testing with CouchDB and jcouchdb, and I wanted to know whether I should write binary data as a byte array or as a base64-encoded string. The latter is definitely the correct answer. I initially tried using couchdb4j, but I found that its exception handling is flawed, or well, doesn’t exist, so I dropped it after a day of tinkering. I’ve since been writing a performance testing tool in Java so that I can reuse some of the code in a Java product we have once I’m satisfied with the results. You can find the source that produced these numbers on GitHub for now. I’ve got some more tests to add, and will spend some time thinking about where to put the final tool.

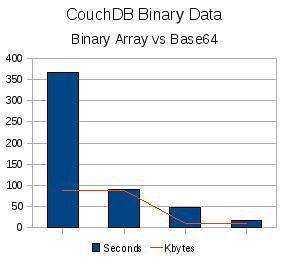

I’m using CouchDB 0.8.0-1 as installed out of the box on Ubuntu 8.10 from the package. The graph above (which I quickly made in OpenOffice and is terrible) is the result of four total runs. Each run was ten threads, each writing one hundred documents. The first two runs write an 88k image, first as a byte array and then as a base64-encoded string; the last two do the same with a 9.5k image. Even though the base64 runs include the time it took to encode the file, the byte array runs still took about three times longer to complete. Futon also hates displaying the byte array data.
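To make the shape of a run concrete, here is a minimal sketch of "N threads, each writing M documents" with the two payload forms. It is not the code from my tool. `DocWriter` is a hypothetical stand-in for whatever actually stores the document (it is not jcouchdb’s API), the `data` field name is made up, and the idea that a byte array ends up as a JSON array of numbers is only my assumption about what the JSON layer does with it. It also uses `java.util.Base64`, which needs Java 8 or later.

```java
import java.util.Base64;
import java.util.Random;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Supplier;

class WriteBench {

    /** Hypothetical stand-in for the code that stores a document body. */
    interface DocWriter {
        void write(String jsonBody) throws Exception;
    }

    /** Body with the image as a base64 string; encoding cost is part of the run. */
    static String base64Body(byte[] image) {
        return "{\"data\":\"" + Base64.getEncoder().encodeToString(image) + "\"}";
    }

    /** Body with the image as a JSON array of numbers (assumed byte array form). */
    static String arrayBody(byte[] image) {
        StringBuilder sb = new StringBuilder("{\"data\":[");
        for (int i = 0; i < image.length; i++) {
            if (i > 0) sb.append(',');
            sb.append(image[i] & 0xFF); // emit each byte as an unsigned value 0..255
        }
        return sb.append("]}").toString();
    }

    /** Runs `threads` workers, each writing `docsPerThread` bodies; returns elapsed ms. */
    static long timeRun(int threads, int docsPerThread,
                        Supplier<String> body, DocWriter writer) {
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        long start = System.nanoTime();
        for (int t = 0; t < threads; t++) {
            pool.execute(() -> {
                for (int i = 0; i < docsPerThread; i++) {
                    try {
                        writer.write(body.get()); // build the body inside the timed loop
                    } catch (Exception e) {
                        throw new RuntimeException(e);
                    }
                }
            });
        }
        pool.shutdown();
        try {
            pool.awaitTermination(1, TimeUnit.HOURS); // wait for every worker to finish
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return TimeUnit.NANOSECONDS.toMillis(System.nanoTime() - start);
    }

    public static void main(String[] args) {
        byte[] image = new byte[9_500]; // random placeholder for the 9.5k image
        new Random(42).nextBytes(image);
        AtomicInteger written = new AtomicInteger();
        DocWriter countOnly = json -> written.incrementAndGet(); // no CouchDB involved

        long b64 = timeRun(10, 100, () -> base64Body(image), countOnly);
        long arr = timeRun(10, 100, () -> arrayBody(image), countOnly);

        System.out.println("docs written: " + written.get());
        System.out.println("base64 ms: " + b64 + ", array ms: " + arr);
        System.out.println("base64 body chars: " + base64Body(image).length()
                + ", array body chars: " + arrayBody(image).length());
    }
}
```

With the counting writer this only measures how long it takes to build the bodies, so the timings say nothing about CouchDB itself. The body sizes are the interesting part: base64 grows the data by about a third, while a comma-separated array of numbers comes out several times larger than the raw bytes. If that assumption about the byte array form holds, it would go some way toward explaining the gap.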

I’ll be adding another method to test reads, since that’s what we’ll be doing primarily. I want to test concurrency on the reads, then compare those numbers to the results of running multiple CouchDB nodes behind nginx to make sure the overhead is low and performance really increases. I know Tim Dysinger has been doing some testing and that he and other folks from #couchdb on irc.freenode.net are going to test some pretty large clusters, so it will be interesting to see how our numbers compare.

The number of threads changes the results quite a bit. Tuning may make a significant difference or none at all. Here is how long the one hundred iterations of the 9.5k image take:

| Threads | base64 (seconds) | byte array (seconds) |
| --- | --- | --- |
| 1 | 2 | 5 |
| 5 | 8 | 21 |
| 20 | 34 | 90 |
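As a quick sanity check on the timings above, this small sketch computes how many times slower the byte array run was at each thread count. The class and method names are just for illustration; the numbers are the ones listed above.

```java
import java.util.Locale;

class Ratios {

    /** How many times slower the byte array run was than the base64 run. */
    static double slowdown(int base64Seconds, int byteArraySeconds) {
        return (double) byteArraySeconds / base64Seconds;
    }

    public static void main(String[] args) {
        // {threads, base64 seconds, byte array seconds}
        int[][] runs = { {1, 2, 5}, {5, 8, 21}, {20, 34, 90} };
        for (int[] r : runs) {
            System.out.println(String.format(Locale.ROOT,
                    "%d threads: %.1fx slower", r[0], slowdown(r[1], r[2])));
        }
    }
}
```

For the 9.5k image that works out to roughly 2.5 to 2.6 times slower, and the ratio stays about the same as the thread count goes up.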

I’ll make another post next week after more testing is done.